Key Takeaways

- A neural network is a function made of layers of neurons

- Each neuron computes a weighted sum of its inputs, adds a bias, and passes the result through an activation function

- The network learns by adjusting those weights and biases — nothing more

This chapter answers:

What is a neural network, structurally and mathematically?

Neural networks ⊂ Machine Learning ⊂ AI

Introduction

A neural network is a mathematical model that learns patterns from data to solve complex tasks.

- A neural network can be viewed as a function that takes in numbers as input and produces numbers as output.

- During training, it automatically adjusts its weights and biases so that its predictions become more accurate over time.

- Common applications include:

  - image recognition

  - speech recognition

  - language translation

The Challenge of Image Recognition

- Background:

  - Recognizing handwritten digits, such as 3, is easy for humans.

  - However, to a computer, such an image is simply a 28 × 28 grid of pixels.

- Challenge:

  - Because handwriting styles vary greatly from person to person, it is very hard to write a fixed set of if-else rules that can correctly identify every digit.

- Solution:

  - Neural networks address this by learning multiple layers of abstraction, breaking complex visual patterns into simpler features, loosely analogous to how the human brain processes what it sees.

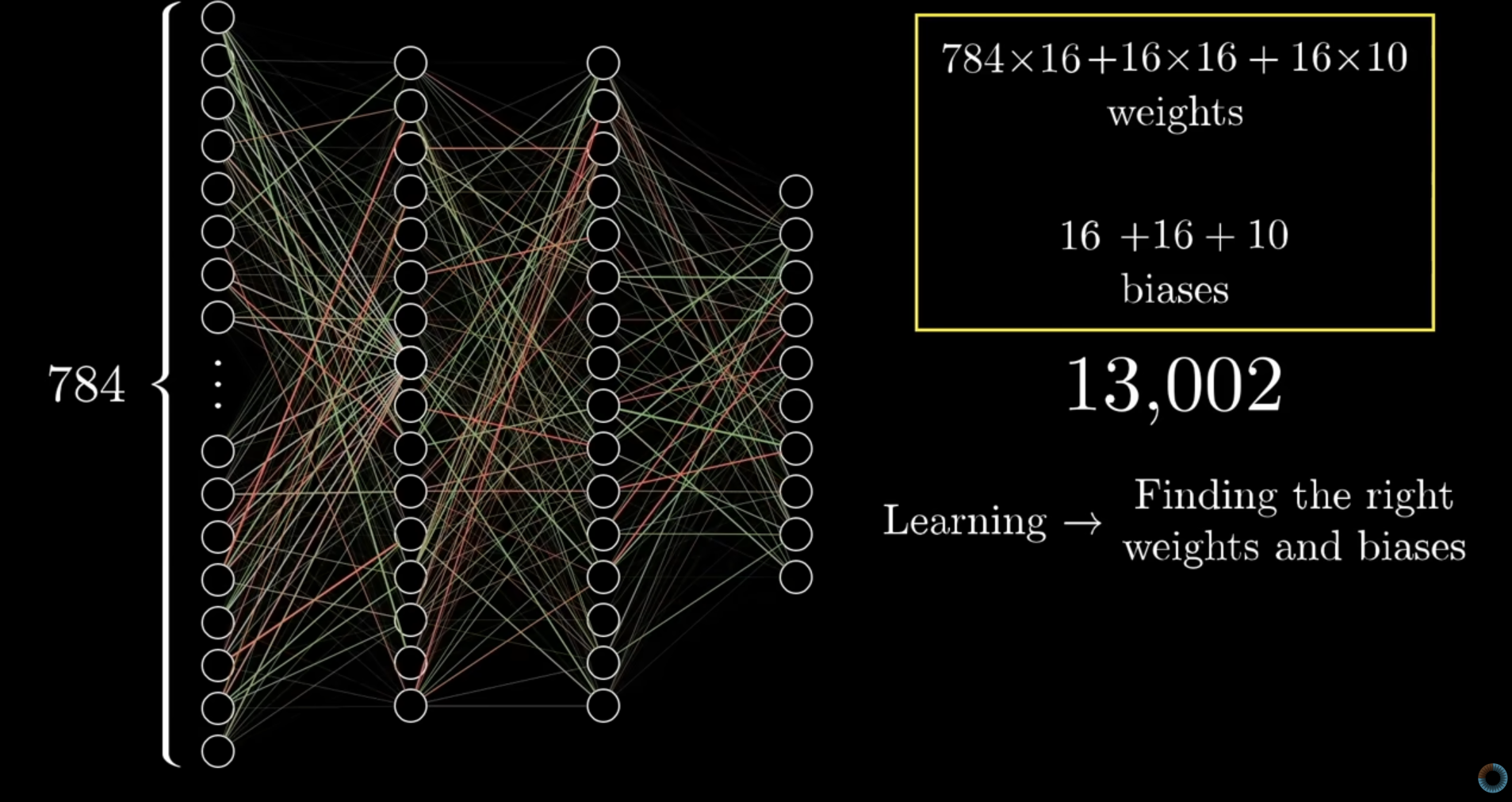

Structure of the Network

- A neural network usually has:

  - Input layer:

    - Each input unit represents one pixel value, so a 28 × 28 image gives 784 input units.

  - Hidden layer(s):

    - Hidden layers process the input step by step and learn useful features.

  - Output layer:

    - If the task is digit recognition, the output layer often has 10 neurons, representing digits 0 to 9.

- Weights and biases:

  - Each layer has its own weights and biases, but the whole network usually has one final cost.

- Cost:

  - A neural network usually produces one final cost for each training example. This cost is computed by comparing the final output with the correct answer, and the final output depends on the weights and biases of every layer.
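
To make the layer structure concrete, the sketch below counts the weights and biases in a small fully connected digit-recognition network. The input size (784 = 28 × 28) and output size (10) follow the text; the two hidden layers of 16 neurons each are an assumption chosen only for illustration, and `count_parameters` is a hypothetical helper name.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the number of neurons per layer, input layer first.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        weights = n_in * n_out  # one weight per connection
        biases = n_out          # one bias per neuron in the next layer
        total += weights + biases
    return total


# 784 inputs (28 x 28 pixels) and 10 outputs (digits 0 to 9) follow the text;
# the hidden sizes (16, 16) are assumed for illustration only.
layer_sizes = [784, 16, 16, 10]
print(count_parameters(layer_sizes))  # 13002
```

Even this small network has thousands of adjustable numbers, and all of them feed into the single final cost.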

What is a neuron?

Simple explanation: a neuron holds a number, called its activation, which lies between 0 and 1 when a sigmoid activation is used.

More precisely, a neuron is a small computational unit that computes and outputs a value.

- A neuron computes a weighted sum of its inputs, adds a bias, and then applies an activation function.

- During training, the network adjusts its weights and biases to improve its predictions.

- The output of a neuron is called its activation.

  - A high activation means the neuron is strongly responding.

  - A low activation means it is not responding much.
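
The three steps of a neuron (weighted sum, bias, activation) can be sketched in a few lines of plain Python. This is a minimal illustration, assuming a sigmoid activation; the function names and all numeric values are made up for the example and do not come from the text.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(inputs, weights, bias):
    """Weighted sum of the inputs, plus a bias, passed through sigmoid."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)


# Illustrative values only (not taken from the text).
inputs = [1.0, 0.0, 1.0]
weights = [2.0, -1.0, 1.0]

print(round(neuron_output(inputs, weights, bias=0.0), 3))   # z = 3  -> 0.953
print(round(neuron_output(inputs, weights, bias=-6.0), 3))  # z = -3 -> 0.047
```

With the same inputs and weights, a more negative bias pushes the neuron from a high activation to a low one.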

Matrix Form of a Neural Network Layer

Each neuron is still computed individually. The matrix formula is just a compact way to write all neurons in the layer at once.

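- For one neuron separately:

  - Linear combination before activation: $z = w_1 a_1 + w_2 a_2 + \dots + w_n a_n + b$

  - The activation function takes $z$ as input and produces the final neuron output: $a' = \sigma(z)$

- For a whole layer: $a^{(l+1)} = \sigma\left(W a^{(l)} + b\right)$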

What does each symbol mean?

1. $a$: activation

- Activation vector of layer $l$, written $a^{(l)}$.

- It contains the outputs of all neurons in the current layer.

For example:

- If layer $l$ has 3 neurons: $a^{(l)} = \begin{bmatrix} 0.2 \\ 0.7 \\ 0.1 \end{bmatrix}$

- This means the three neurons in the current layer output 0.2, 0.7, and 0.1.

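2. $W$: weight

- Weight matrix connecting layer $l$ to layer $l+1$.

- Weight = importance of an input.

- If the current layer has 3 neurons and the next layer has 2 neurons, $W$ is a $2 \times 3$ matrix:

  - 2 rows → one row for each neuron in the next layer

  - 3 columns → one column for each neuron in the current layer

Example:

$$W = \begin{bmatrix} w_{11} & w_{12} & w_{13} \\ w_{21} & w_{22} & w_{23} \end{bmatrix}$$

Here $w_{ij}$ is the weight from neuron $j$ in the current layer to neuron $i$ in the next layer.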

3. $b$: bias

- Bias vector for the next layer.

- Bias is an extra number added at the end of the weighted sum.

- If the next layer has 2 neurons: $b = \begin{bmatrix} b_1 \\ b_2 \end{bmatrix}$

- Each neuron in the next layer has its own bias.

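4. $\sigma$: activation function

- Activation function, such as:

  - sigmoid

  - ReLU, which stands for Rectified Linear Unit

- It is applied element-wise to the vector.

- For example, if $z = \begin{bmatrix} z_1 \\ z_2 \end{bmatrix}$, then $\sigma(z) = \begin{bmatrix} \sigma(z_1) \\ \sigma(z_2) \end{bmatrix}$.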
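
Putting the four symbols together, one layer can be sketched as a single function. This is a minimal plain-Python illustration rather than a reference implementation; `layer_forward` is a hypothetical name, and the all-zero weights and biases are chosen only so the result is easy to check by hand.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def layer_forward(W, a, b, activation=sigmoid):
    """Compute activation(W a + b) for one layer.

    W is a list of rows (one row per neuron in the next layer),
    a is the current layer's activation vector, b is the bias vector.
    """
    if len(W) != len(b) or any(len(row) != len(a) for row in W):
        raise ValueError("shape mismatch: W must be len(b) x len(a)")
    z = [sum(w * x for w, x in zip(row, a)) + bias for row, bias in zip(W, b)]
    return [activation(value) for value in z]  # applied element-wise


# With all weights and biases at zero, every z is 0 and sigmoid(0) = 0.5.
a = [0.2, 0.7, 0.1]            # three activations in the current layer
W = [[0.0, 0.0, 0.0],
     [0.0, 0.0, 0.0]]          # 2 x 3: 2 next-layer neurons, 3 inputs each
b = [0.0, 0.0]
print(layer_forward(W, a, b))  # [0.5, 0.5]
```

The shape check mirrors the rule above: the number of rows of `W` matches the next layer, and the number of columns matches the current layer.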

Sigmoid and ReLU (activation functions)

| Function | Formula | Output range | Meaning |
|---|---|---|---|
| Sigmoid | $\sigma(x) = \dfrac{1}{1 + e^{-x}}$ | $(0, 1)$ | squash into 0 to 1 |
| ReLU | $\mathrm{ReLU}(x) = \max(0, x)$ | $[0, \infty)$ | keep positive, cut off negative |

input → weighted sum + bias → activation function → output

More precisely, the activation function must be nonlinear.

Activation functions add nonlinearity, so the network can learn complex patterns.
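
One way to see why this matters: if the activation function were removed, two stacked layers would collapse into a single one. Writing the two layers as $W_1, b_1$ and $W_2, b_2$ (symbols introduced here only for illustration) and the input as $a$:

$$W_2 (W_1 a + b_1) + b_2 = (W_2 W_1)\, a + (W_2 b_1 + b_2)$$

The right-hand side is again "one matrix times the input, plus one bias vector", so without a nonlinear $\sigma$ between them, extra layers would not let the network represent anything a single layer could not.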

- Sigmoid

  - It squashes the pre-activation value into a value between 0 and 1.

- ReLU

  - if x < 0, output = 0

  - if x ≥ 0, output = x
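
Both functions are short enough to write out directly. The sketch below is a plain-Python illustration of the two definitions above; the sample inputs are arbitrary.

```python
import math


def sigmoid(x):
    """Map any real x to a value strictly between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-x))


def relu(x):
    """Return 0 for negative x, and x itself otherwise."""
    return max(0.0, x)


for x in [-2.0, 0.0, 3.0]:
    print(x, round(sigmoid(x), 3), relu(x))
# -2.0 0.119 0.0
# 0.0 0.5 0.0
# 3.0 0.953 3.0
```

Sigmoid never reaches exactly 0 or 1 for moderate inputs, while ReLU passes positive values through unchanged.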

Why Are Layers Useful?

- Layers help the network turn low-level features into high-level features.

- With each layer, the network gradually transforms the representation, making the final classification easier.

- In image recognition, earlier layers may capture edges or curves, while later layers combine them into shapes and finally into whole digits.

- Pixels → edges/curves → shapes → digit
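
Mechanically, "layer after layer" just means feeding each layer's output into the next one. The sketch below chains two layers and records how the size of the representation changes. The layer sizes (4 → 3 → 2), the random weights, and the helper names are all assumptions for illustration; an untrained network like this one does not detect real edges or shapes.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(layers, a):
    """Pass an input vector through each (W, b) pair in turn."""
    sizes = [len(a)]
    for W, b in layers:
        a = [sigmoid(sum(w * x for w, x in zip(row, a)) + bias)
             for row, bias in zip(W, b)]
        sizes.append(len(a))
    return a, sizes


def random_layer(n_in, n_out, rng):
    """Hypothetical helper: an untrained layer with random weights."""
    W = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    b = [rng.uniform(-1, 1) for _ in range(n_out)]
    return W, b


rng = random.Random(0)
layers = [random_layer(4, 3, rng), random_layer(3, 2, rng)]
output, sizes = forward(layers, [0.0, 1.0, 1.0, 0.0])
print(sizes)  # [4, 3, 2]
```

Training is what turns these intermediate vectors into useful features; the code only shows how the representation flows from one layer to the next.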

Example

Example 1: Layer-to-Layer Calculation

Each neuron in the next layer computes a weighted sum of all activations from the previous layer, adds a bias, and then applies an activation function.

From a 3-neuron layer to a 2-neuron layer

- Layer to layer:

  - Each connection between neurons has its own weight.

  - Each neuron has its own bias.

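Suppose

- The current layer has 3 neurons, with activations:

$$a = \begin{bmatrix} a_1 \\ a_2 \\ a_3 \end{bmatrix}$$

- Weight matrix:

$$W = \begin{bmatrix} w_{11} & w_{12} & w_{13} \\ w_{21} & w_{22} & w_{23} \end{bmatrix}$$

- Bias vector:

$$b = \begin{bmatrix} b_1 \\ b_2 \end{bmatrix}$$

Step 1: Compute the linear part

$$z = W a + b$$

- First do the matrix multiplication:

  - First row: $w_{11} a_1 + w_{12} a_2 + w_{13} a_3$

  - Second row: $w_{21} a_1 + w_{22} a_2 + w_{23} a_3$

  - So:

$$W a = \begin{bmatrix} w_{11} a_1 + w_{12} a_2 + w_{13} a_3 \\ w_{21} a_1 + w_{22} a_2 + w_{23} a_3 \end{bmatrix}$$

- Now add the bias:

$$z = W a + b = \begin{bmatrix} w_{11} a_1 + w_{12} a_2 + w_{13} a_3 + b_1 \\ w_{21} a_1 + w_{22} a_2 + w_{23} a_3 + b_2 \end{bmatrix} = \begin{bmatrix} z_1 \\ z_2 \end{bmatrix}$$

Step 2: Apply the activation function

- If we use sigmoid, the next layer's activations are $a' = \sigma(z)$.

- That means: $a'_1 = \sigma(z_1)$ and $a'_2 = \sigma(z_2)$.

- Sigmoid formula: $\sigma(x) = \dfrac{1}{1 + e^{-x}}$

- Approximate values for reference: $\sigma(-1) \approx 0.27$, $\sigma(0) = 0.5$, $\sigma(1) \approx 0.73$

- So:

$$a' = \begin{bmatrix} \sigma(z_1) \\ \sigma(z_2) \end{bmatrix}$$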

How to view each neuron separately

First neuron in the next layer

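- It uses the first row of the weight matrix and the first bias, where $a_1, a_2, a_3$ are the current layer's activations:

$$a'_1 = \sigma(w_{11} a_1 + w_{12} a_2 + w_{13} a_3 + b_1)$$

Second neuron in the next layer

- It uses the second row of the weight matrix and the second bias:

$$a'_2 = \sigma(w_{21} a_1 + w_{22} a_2 + w_{23} a_3 + b_2)$$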
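
To see the two steps with actual numbers, here is a small worked sketch in plain Python. The activations 0.2, 0.7, 0.1 reuse the example from the symbol definitions earlier in these notes; the weight matrix and bias vector are assumed values, picked only to keep the arithmetic easy to follow by hand.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


# Activations reuse the 0.2, 0.7, 0.1 example from earlier in these notes.
a = [0.2, 0.7, 0.1]
# W and b are assumed values, picked only to make the arithmetic easy.
W = [[0.5, -1.0, 2.0],
     [1.0, 0.5, -0.5]]
b = [0.1, -0.2]

# Step 1: linear part, one row of W per neuron in the next layer.
Wa = [sum(w * x for w, x in zip(row, a)) for row in W]
z = [wa_i + b_i for wa_i, b_i in zip(Wa, b)]

# Step 2: element-wise sigmoid.
a_next = [sigmoid(z_i) for z_i in z]

print([round(v, 3) for v in Wa])      # [-0.4, 0.5]
print([round(v, 3) for v in z])       # [-0.3, 0.3]
print([round(v, 3) for v in a_next])  # [0.426, 0.574]
```

By hand, the first row gives 0.5·0.2 − 1.0·0.7 + 2.0·0.1 = −0.4, and adding the bias 0.1 gives −0.3; the second row gives 0.2 + 0.35 − 0.05 = 0.5, and adding the bias −0.2 gives 0.3.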

Example 2: Weight

- Learning → finding the right weights and biases
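
As a rough illustration of what "finding the right weights" means, the sketch below evaluates a single sigmoid neuron under two candidate weight settings and compares their costs. The squared-error cost, the input, the target, and both weight settings are assumptions made for this example; these notes do not specify a particular cost function.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def cost(weights, bias, inputs, target):
    """Squared error between one neuron's output and the target (assumed cost)."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return (sigmoid(z) - target) ** 2


inputs, target = [1.0, 0.5], 1.0  # illustrative training example

before = cost([-1.0, 0.0], 0.0, inputs, target)  # poorly chosen weights
after = cost([2.0, 2.0], 0.0, inputs, target)    # better-suited weights

print(round(before, 3), round(after, 3))  # 0.534 0.002
```

The network itself is unchanged between the two calls; only the weights differ, and the lower cost marks the better setting. Training is a procedure for finding such settings automatically.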