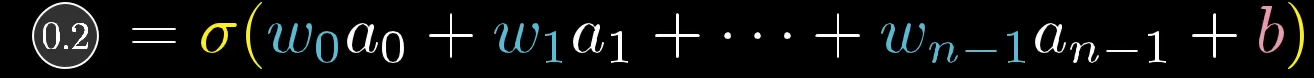

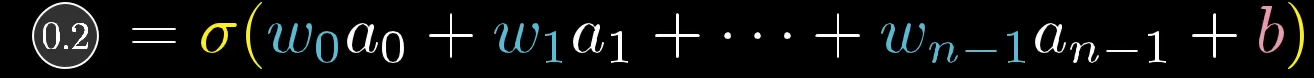

- 0.2:

- This is the **output (activation)** of the current neuron. It is the final value produced by this neuron after all the calculations are done.

- **$\sigma$**: sigma

- This is the **activation function**.

- In this picture it denotes the sigmoid function, $\sigma(z) = \frac{1}{1 + e^{-z}}$, which squashes the value inside the parentheses into a number between 0 and 1.

- **$w_0, w_1, \dots, w_{n-1}$ (weights)**:

- These are the **weights** connected to each input from the previous layer.

- Each weight sets how strongly, and in which direction (positive or negative), that input influences the current neuron.

- **$a_0, a_1, \dots, a_{n-1}$**:

- These are the outputs from the previous layer.

- The current neuron uses all of them as inputs.

- **$b$ (bias)**:

- This is the **bias term**. It shifts the weighted sum before the activation function is applied, letting the neuron adjust how large the weighted sum must be before it activates.

- What the whole picture means:

- First, the neuron computes a weighted sum of all inputs plus the bias: $z = w_0 a_0 + w_1 a_1 + \dots + w_{n-1} a_{n-1} + b$

- Then it applies the sigmoid function: $a = \sigma(z)$

- The final output shown in the picture is 0.2.
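
The two steps above can be sketched in a few lines of Python. This is a minimal illustration, not the picture's actual network: the function names `sigmoid` and `neuron_output` are hypothetical, and the weights, inputs, and bias are made-up values chosen only so that the result lands near 0.2.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(weights, activations, bias):
    """Compute a = sigmoid(w_0*a_0 + ... + w_{n-1}*a_{n-1} + b)."""
    # Step 1: weighted sum of the previous layer's outputs, plus the bias.
    z = sum(w * a for w, a in zip(weights, activations)) + bias
    # Step 2: apply the activation function.
    return sigmoid(z)


# Hypothetical values (not taken from the picture).
weights = [0.5, -1.0, 0.25]      # w_0, w_1, w_2
activations = [0.2, 0.9, 0.4]    # a_0, a_1, a_2 from the previous layer
bias = -0.7                      # b

a = neuron_output(weights, activations, bias)
print(round(a, 2))  # about 0.2, since z is about -1.4
```

With these assumed numbers the weighted sum is $z = 0.1 - 0.9 + 0.1 - 0.7 = -1.4$, and $\sigma(-1.4) \approx 0.198$, which rounds to the 0.2 shown for the neuron.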